How Does Valley Scale LinkedIn Outreach Without Sacrificing Personalization Quality?

Saniya

Why Traditional Personalization Doesn't Scale:

The traditional trade-off in outbound sales forces a choice: high-volume generic outreach with low response rates, or low-volume personalized outreach with high response rates but limited pipeline. Valley eliminates this trade-off through AI-powered personalization that maintains message quality while scaling to hundreds of prospects monthly.

Manual personalization creates unsustainable bottlenecks:

Time Investment Per Prospect:

Researching and messaging one prospect thoroughly requires: 10-15 minutes reviewing their LinkedIn profile, experience, and recent activity; 5-10 minutes investigating the company, its recent news, and its growth stage; 5-10 minutes identifying relevant pain points and talking points; 5-10 minutes crafting a personalized message that incorporates the research; and 2-3 minutes proofreading and sending.

Total: 27-48 minutes per prospect for truly personalized outreach.
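The total is the sum of the step ranges listed above. Here is a minimal Python sketch of that arithmetic. The step labels are paraphrased from the list, and the minute ranges are the ones given.

```python
# Manual per-prospect time budget in minutes, taken from the step list above.
# Each step is (low, high); the totals are plain sums of the bounds.
STEPS = {
    "review LinkedIn profile, experience, activity": (10, 15),
    "investigate company, news, growth stage": (5, 10),
    "identify pain points and talking points": (5, 10),
    "craft personalized message": (5, 10),
    "proofread and send": (2, 3),
}

def total_minutes(steps: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Return (best case, worst case) minutes for one prospect."""
    low = sum(lo for lo, _ in steps.values())
    high = sum(hi for _, hi in steps.values())
    return low, high

print(total_minutes(STEPS))  # (27, 48)
```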

Volume Limitations:

At 30 minutes per prospect: 2 prospects per hour maximum, 16 prospects per day (8-hour workday), 80 prospects per week, and 320 prospects per month (one person full-time).

For teams needing to engage 1,000+ prospects monthly, manual personalization requires 3+ full-time resources doing nothing but research and writing.
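The capacity math can be sketched the same way. The sketch makes three assumptions:

- 5-day weeks and 4-week months, which is how the 80-per-week and 320-per-month figures line up.
- Headcount is rounded up to whole people.
- `monthly_capacity` and `people_needed` are illustrative names, not part of any Valley API.

```python
import math

def monthly_capacity(minutes_per_prospect: int, hours_per_day: int = 8,
                     days_per_week: int = 5, weeks_per_month: int = 4) -> int:
    """Prospects one person can research and message per month (assumed calendar)."""
    per_day = (hours_per_day * 60) // minutes_per_prospect  # whole prospects only
    return per_day * days_per_week * weeks_per_month

def people_needed(target_prospects: int, capacity_per_person: int) -> int:
    """Full-time people required, rounded up to whole people."""
    return math.ceil(target_prospects / capacity_per_person)

capacity = monthly_capacity(30)
print(capacity, people_needed(1000, capacity))  # 320 4
```

At 1,000 prospects a month, 1,000 / 320 = 3.125, which rounds up to four full-time people. That is consistent with the "3+" above.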

Quality Degradation Under Pressure:

When volume demands increase: research depth decreases (skimming vs. thorough analysis), personalization becomes superficial (name/company only), message quality declines (fatigue, shortcuts), and errors increase (wrong details, outdated information).

The Generic Alternative's Failure:

Scaling through templated messages produces: a 1-2% response rate (vs. 10-15% for personalized outreach), poor meeting quality (curiosity seekers, not serious buyers), a damaged sender reputation (spam perception), and capacity wasted on low-probability conversations.

How Valley Achieves Personalization at Scale:

Valley's architecture automates time-intensive personalization work while maintaining quality:

Automated Research Pipeline:

For every prospect, Valley automatically: enriches LinkedIn profile data (pulls current title, company, experience), researches company intelligence (size, industry, funding, news), analyzes LinkedIn activity (posts, engagement patterns, interests), identifies trigger events (job changes, company announcements, funding), correlates behavioral signals (why the prospect entered the campaign, such as a profile view or post engagement), and synthesizes personalization insights (specific talking points for this prospect).

This research completes in 30-60 seconds per prospect, compared with roughly 20-35 minutes for the manual research steps described earlier.

AI-Generated Contextual Messages:

Valley's 7-LLM architecture generates personalized messages that: reference the specific signal that triggered outreach ("I noticed you viewed my profile 3 times this week"), incorporate company-specific research ("Based on [Company]'s recent Series B and expansion into [geography]"), acknowledge the prospect's role and challenges ("Given your background leading sales ops at [previous company], you've probably experienced [pain point]"), and use natural language matching your voice (trained on your writing patterns).

Each message is generated individually, never assembled from a template with mail-merge fields.

Scalable Quality Control:

Valley maintains quality through: AI confidence scoring (flags low-quality generations for review), human-in-the-loop approval (review messages before sending when needed), continuous learning (improves from every edit and approval), and quality metrics tracking (monitors response rates as a quality indicator).
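One way to picture confidence scoring combined with human-in-the-loop approval is a simple routing gate. This is a hypothetical sketch. The `Draft` type, the 0-1 confidence score, and the 0.7 threshold are assumptions for illustration, not Valley's actual settings.

```python
from dataclasses import dataclass

@dataclass
class Draft:
    prospect: str
    text: str
    confidence: float  # hypothetical 0-1 quality score attached by the generator

def route(draft: Draft, review_threshold: float = 0.7,
          require_review: bool = False) -> str:
    """Send high-confidence drafts automatically; hold the rest for a human.

    `require_review` models campaigns (e.g. strategic accounts) where every
    message is approved manually. The 0.7 default is illustrative only.
    """
    if require_review or draft.confidence < review_threshold:
        return "human_review"
    return "auto_send"

drafts = [Draft("A", "Hi A, ...", 0.92), Draft("B", "Hi B, ...", 0.55)]
print([route(d) for d in drafts])  # ['auto_send', 'human_review']
```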

Batch Processing at Individual Quality:

Valley processes hundreds of prospects simultaneously: research runs in parallel (not sequential manual effort), AI generates messages concurrently (not one-at-a-time writing), quality checks are automated (not a manual review of each message), and delivery is scheduled optimally (timezone-aware, timing-optimized).

Result: 100-200 personalized messages prepared in the time it takes a human to research 5-10 prospects.
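Here is a rough sketch of concurrent batch preparation plus timezone-aware delivery. The hypothetical `prepare()` step stands in for research and generation. The sketch uses fixed UTC offsets to stay self-contained. A production scheduler would more likely use IANA time zones (for example Python's `zoneinfo`) so that daylight-saving changes are handled.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import datetime, time, timedelta, timezone

def prepare(prospect: dict) -> dict:
    """Hypothetical stand-in for per-prospect research and message generation."""
    return {**prospect, "message": f"Hi {prospect['name']}, ..."}

def prepare_batch(prospects: list[dict], workers: int = 8) -> list[dict]:
    """Run prepare() concurrently; pool.map still returns results in input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(prepare, prospects))

def next_send_time(now_utc: datetime, utc_offset_minutes: int,
                   local_send: time = time(9, 0)) -> datetime:
    """Next occurrence of `local_send` in the prospect's local time, as UTC."""
    tz = timezone(timedelta(minutes=utc_offset_minutes))
    local_now = now_utc.astimezone(tz)
    candidate = datetime.combine(local_now.date(), local_send, tzinfo=tz)
    if candidate <= local_now:
        candidate += timedelta(days=1)  # today's slot has passed; use tomorrow's
    return candidate.astimezone(timezone.utc)

prospects = [{"name": "Ana", "utc_offset": 60}, {"name": "Raj", "utc_offset": 330}]
now = datetime(2025, 1, 6, 12, 0, tzinfo=timezone.utc)
for p in prepare_batch(prospects):
    print(p["name"], next_send_time(now, p["utc_offset"]).isoformat())
```

Threads suit this shape of work because research and generation would mostly wait on network calls. The 9:00 local send slot is an arbitrary example.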

How Valley's Personalization Depth Compares to Manual:

Automated doesn't mean superficial. Valley's research often exceeds manual depth:

Data Source Coverage:

Manual research typically covers: a LinkedIn profile review, a company website visit, maybe a Google News search, and perhaps one or two additional sources.

Total: 4-6 data sources, 10-15 minutes.

Valley automated research covers: LinkedIn profile and activity, company website and blog, news articles and press releases, funding databases (Crunchbase, PitchBook), technology stack (BuiltWith and similar tools), job postings (growth signals), social media, industry publications, and public financial data when available.

Total: 25+ data sources, 30-60 seconds.

Research Consistency:

Manual research quality varies: experienced reps research better than junior reps, morning research is more thorough than research done at 5 PM (fatigue), important prospects are researched deeply while less important ones are skimmed, and subjective judgment affects what counts as "relevant."

Valley research is identical for every prospect: same sources consulted, same depth of analysis, no fatigue or mood variation, consistent quality regardless of prospect priority, and objective criteria determine relevance.

Research Recency:

Manual research is point-in-time: it reflects only the information available when the researcher looked, becomes outdated quickly (news published afterward doesn't appear), and is stale by the time the message is sent if there is any delay.

Valley research is continuous: it checks for new information before every outreach, incorporates news from the past 24-48 hours, updates when prospect signals recur, and is current as of message send time.
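Checking for recent news before each send amounts to filtering by publication time. The sketch below assumes each item carries a timezone-aware `published` timestamp. The 48-hour window mirrors the text, and the sample headlines are made up.

```python
from datetime import datetime, timedelta, timezone

def recent_items(items: list[dict], now: datetime, window_hours: int = 48) -> list[dict]:
    """Keep items published within the last `window_hours`, newest first."""
    cutoff = now - timedelta(hours=window_hours)
    fresh = [it for it in items if cutoff <= it["published"] <= now]
    return sorted(fresh, key=lambda it: it["published"], reverse=True)

now = datetime(2025, 3, 10, 9, 0, tzinfo=timezone.utc)
news = [
    {"title": "New VP Sales hired", "published": now - timedelta(hours=40)},
    {"title": "Series B announced", "published": now - timedelta(hours=20)},
    {"title": "Office move", "published": now - timedelta(days=9)},
]
print([it["title"] for it in recent_items(news, now)])
# ['Series B announced', 'New VP Sales hired']
```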

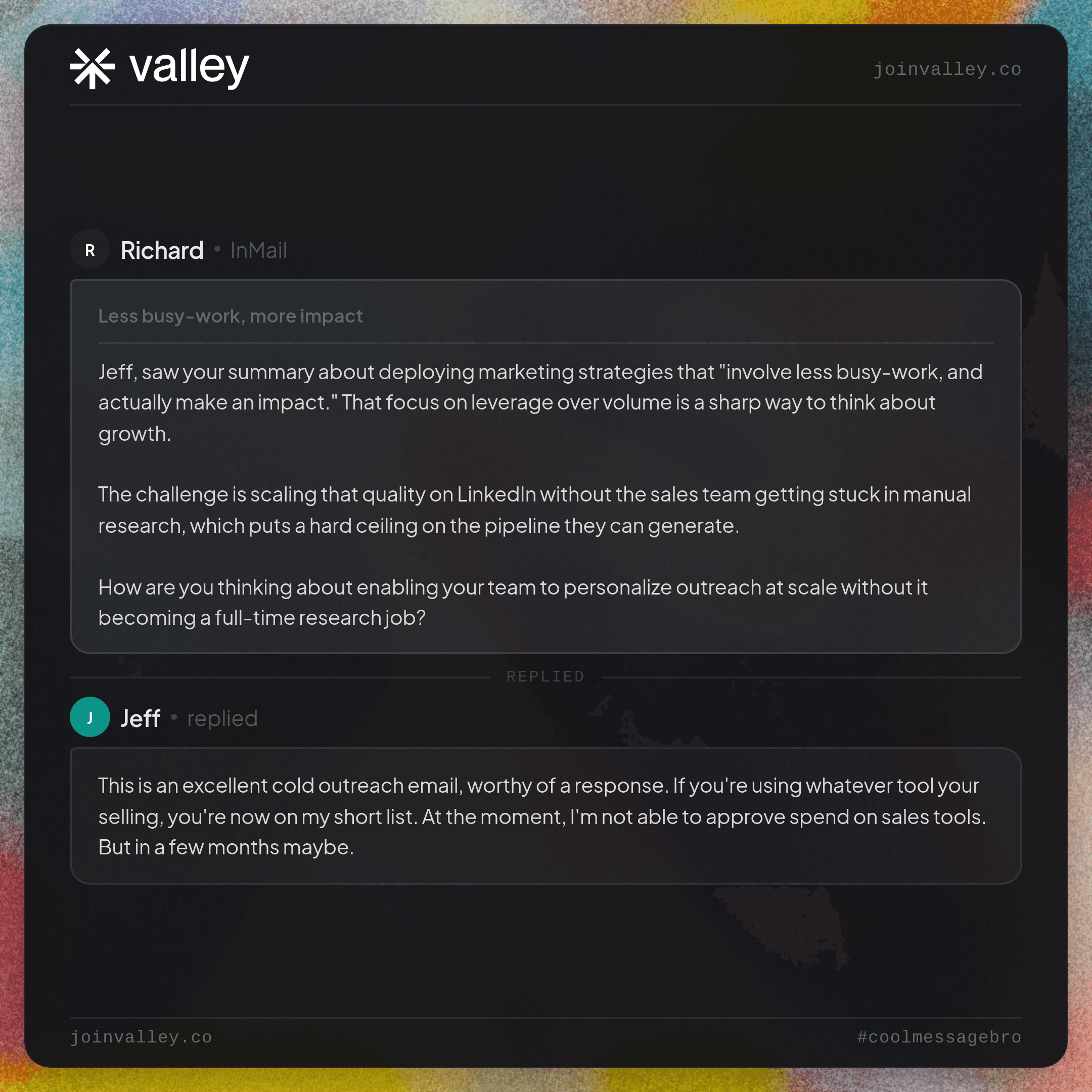

► Check Out More of Valley's Incredible Outreach: A compilation of real-time messages and responses!

How Valley Balances Personalization Depth with Volume Goals:

Different campaigns warrant different personalization levels:

Tiered Personalization Strategy:

Valley enables sophisticated tiering:

Tier 1 - Deep Personalization (Best-Fit Prospects, High Signals):

For prospects scoring 80-100 on ICP fit with strong signals: maximum research depth (all 25+ sources), longest AI generation time (most thoughtful messages), human review required (strategic importance), custom research additions (manual notes, special context), and multiple message variations (A/B tested to find the best performer).

Volume: 20-50 prospects monthly per seat
Time investment: 5-10 minutes human review per prospect
Response rate: 20-30%

Tier 2 - Standard Personalization (Good-Fit Prospects, Moderate Signals):

For prospects scoring 60-79 with moderate signals: comprehensive automated research (20+ sources), standard AI generation (proven templates and patterns), selective human review (spot-check 20%), automated approval for routine cases, and proven message frameworks.

Volume: 100-150 prospects monthly per seat
Time investment: 2-3 minutes human oversight per prospect
Response rate: 12-18%

Tier 3 - Light Personalization (Okay-Fit Prospects, Low Signals):

For prospects scoring 40-59 with weak signals: core automated research (10-15 key sources), efficient AI generation (faster, tested patterns), minimal human review (exception cases only), automated approval standard, and focus on volume efficiency.

Volume: 200-300 prospects monthly per seat
Time investment: <1 minute human involvement per prospect
Response rate: 6-10%
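The ICP score bands translate directly into a lookup. This sketch covers only the ICP dimension. Signal strength and the other factors discussed below would adjust the result, and the tier labels are hypothetical.

```python
from typing import Optional

def assign_tier(icp_score: int) -> Optional[str]:
    """Map a 0-100 ICP fit score to the personalization tiers described above."""
    if 80 <= icp_score <= 100:
        return "tier1_deep"
    if 60 <= icp_score <= 79:
        return "tier2_standard"
    if 40 <= icp_score <= 59:
        return "tier3_light"
    return None  # below 40 (or out of range): no tier defined in the text

print([assign_tier(s) for s in (92, 65, 41, 30)])
```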

Total Blended Performance:

Across all tiers: 320-500 prospects contacted monthly per seat (10-15x manual capacity), blended response rate 12-18% (maintains quality), human time investment 40-60 hours monthly (vs. 160 hours pure manual), and 50-80 meetings booked monthly per seat (pipeline generation).
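The blended rate is volume-weighted, so it depends on where each seat lands within the tier ranges. Here is a small sketch for computing it. The midpoint mix is one illustrative input; in practice you would plug in your observed volumes and response rates per tier.

```python
def blended(tiers: list[tuple[int, float]]) -> tuple[int, float, float]:
    """Return (total prospects, expected responses, volume-weighted response rate)."""
    total = sum(n for n, _ in tiers)
    responses = sum(n * rate for n, rate in tiers)
    return total, responses, responses / total

# Midpoints of the tier ranges above: (prospects per month, response rate)
midpoints = [(35, 0.25), (125, 0.15), (250, 0.08)]
total, responses, rate = blended(midpoints)
print(total, round(responses, 2), f"{rate:.1%}")
```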

How Valley Identifies Which Prospects Deserve Deepest Personalization:

Strategic resource allocation maximizes ROI:

ICP Fit Score:

Prospects matching your ICP precisely (80-100 score): get maximum personalization investment, highest likelihood of conversion, and worth extra human attention.

Prospects partially matching (60-79): standard personalization sufficient, good conversion probability, and automated quality adequate.

Prospects marginally matching (40-59): light personalization only, lower conversion likelihood, and efficiency prioritized over depth.

Signal Strength:

Multiple high-intent signals (pricing visit + 3 profile views + post comments): indicate serious research, warrant deep personalization, and justify extra effort.

Single low-intent signals (one like): require only basic acknowledgment, an automated standard message is fine, and volume matters more than depth.

Account Value:

Enterprise accounts (500+ employees, high deal value): deserve deep personalization regardless of signal strength, strategic importance justifies investment, and relationship-building emphasis.

SMB accounts (sub-50 employees, smaller deals): efficient personalization appropriate, volume drives pipeline more than depth, and automation-first approach.

Competitive Context:

Active vendor evaluation (engaging multiple competitors): requires differentiated personalization, competitive positioning critical, and human strategic input valuable.

Early research (single vendor content engagement): standard personalization sufficient, not yet comparing options, and relationship building focus.
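These factors can be combined into a rule of thumb. The sketch below assumes one possible ordering:

- Strategic accounts and active evaluations come first.
- ICP fit plus signals come next.
- Small accounts are capped at standard depth.

Field names and cutoffs come from the text where possible and are otherwise illustrative. This is not Valley's scoring logic.

```python
from dataclasses import dataclass

@dataclass
class Prospect:
    icp_score: int              # 0-100 ICP fit
    high_intent_signals: int    # e.g. pricing visit, repeated profile views, post comments
    employees: int
    evaluating_competitors: bool

def personalization_depth(p: Prospect) -> str:
    """Illustrative ordering of the factors above; not Valley's actual rules."""
    if p.employees >= 500 or p.evaluating_competitors:
        return "deep"      # enterprise or active evaluation: worth human strategic input
    strong_signals = p.high_intent_signals >= 2
    if p.icp_score >= 80 and strong_signals and p.employees >= 50:
        return "deep"      # best fit plus serious research behavior
    if p.icp_score >= 60 or strong_signals:
        return "standard"  # includes small accounts that would otherwise qualify for deep
    return "light"         # marginal fit and weak signals: efficiency first

print(personalization_depth(Prospect(70, 0, 1200, False)))  # deep
print(personalization_depth(Prospect(85, 3, 30, False)))    # standard
print(personalization_depth(Prospect(45, 1, 30, False)))    # light
```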

How Valley Maintains Message Quality Across Volume Increases:

Scaling without quality degradation requires systematic controls:

Quality Metrics Dashboard:

Valley tracks quality indicators: response rate by campaign (quality correlates with responses), message approval rate (AI generating acceptable quality), edit frequency (how often humans change AI output), negative response rate (spam/angry responses signal quality issues), and meeting booking conversion (ultimate quality measure).

Quality Degradation Alerts:

Automated warnings when: response rate drops >20% week-over-week (quality issue?), negative responses spike >2% (messaging problems?), approval rate falls <70% (AI confidence declining?), or meeting conversion decreases (lower-quality conversations?).
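The alert conditions map onto a week-over-week comparison. This sketch reads ">20% week-over-week" as a relative drop. It also treats the negative-response and approval thresholds as shares of messages. Those interpretations and the metric names are assumptions.

```python
def quality_alerts(prev: dict, curr: dict) -> list[str]:
    """Return alert messages for this week's metrics compared with last week's."""
    alerts = []
    prev_rr, curr_rr = prev["response_rate"], curr["response_rate"]
    if prev_rr > 0 and (prev_rr - curr_rr) / prev_rr > 0.20:
        alerts.append("response rate down more than 20% week-over-week")
    if curr["negative_rate"] > 0.02:
        alerts.append("negative responses above 2%")
    if curr["approval_rate"] < 0.70:
        alerts.append("approval rate below 70%")
    if curr["meeting_conversion"] < prev["meeting_conversion"]:
        alerts.append("meeting conversion decreased")
    return alerts

last_week = {"response_rate": 0.15, "negative_rate": 0.01,
             "approval_rate": 0.85, "meeting_conversion": 0.40}
this_week = {"response_rate": 0.11, "negative_rate": 0.025,
             "approval_rate": 0.80, "meeting_conversion": 0.40}
print(quality_alerts(last_week, this_week))
```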

Continuous AI Training:

Valley's personalization improves over time: learns from every approved message (what works), incorporates edits (what humans change), adapts to response patterns (what generates replies), and evolves with your voice (stays current as style changes).

Month 1 quality ≠ Month 6 quality, thanks to continuous improvement.

Sample Quality Audits:

Regular quality checks: monthly review of 20-30 sent messages (spot-check quality), evaluate personalization depth (specific enough?), assess natural language (sounds human?), and identify improvement opportunities (patterns to address).
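A reproducible random draw is one simple way to pick the 20-30 messages for a monthly audit. This is a sketch only. The message IDs are hypothetical, and stratifying by tier or campaign would be a reasonable refinement.

```python
import random
from typing import Optional

def audit_sample(sent_ids: list[str], k: int = 25, seed: Optional[int] = None) -> list[str]:
    """Draw up to k distinct sent messages for a manual quality review."""
    rng = random.Random(seed)  # seeded so the same audit can be reproduced later
    return rng.sample(sent_ids, min(k, len(sent_ids)))

sent = [f"msg-{i}" for i in range(400)]
batch = audit_sample(sent, k=25, seed=7)
print(len(batch), len(set(batch)))  # 25 25
```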

How Valley Users Scale Progressively:

Successful scaling follows phased approach:

Phase 1: Quality Foundation (Months 1-2):

Start with deep personalization, low volume: 20-30 prospects weekly, manual approval of all messages, focus on AI training and voice matching, and establish quality baseline and benchmarks.

Goal: Achieve 15-20% response rate with strong personalization.

Phase 2: Selective Automation (Months 3-4):

Introduce tiered personalization: maintain deep personalization for top tier (20-30 weekly), add standard personalization tier (50-75 weekly), total volume: 70-105 weekly, and selective automation (top tier manual, standard tier auto-approved after review).

Goal: Maintain 12-18% blended response rate at 3x volume.

Phase 3: Volume Scaling (Months 5-6):

Expand all tiers: deep personalization (30-40 weekly), standard personalization (80-100 weekly), light personalization (100-150 weekly), total volume: 210-290 weekly, and mostly automated (manual review exceptions only).

Goal: Maintain 10-15% blended response rate at 8-10x original volume.

Phase 4: Optimized Scale (Month 7+):

Mature operation: deep tier (40-50 weekly), standard tier (100-150 weekly), light tier (200-300 weekly), total volume: 340-500 weekly, and fully automated with strategic spot-checks.

Goal: Sustain 12-18% response rate at 12-15x original volume.

What Results to Expect from Valley's Scaled Personalization:

Performance metrics validate the approach:

Response Rate Maintenance:

Manual personalization baseline: 15-20% response rate, 50-100 prospects monthly.

Valley scaled personalization: 12-18% response rate (a 10-20% relative decline, acceptable in exchange for 4-10x the volume), 400-500 prospects monthly, and net result: roughly 4-6x more total responses despite a slightly lower percentage.

Example: 20% of 80 manual = 16 responses vs. 15% of 400 Valley = 60 responses.

Meeting Volume:

Manual approach: 50-80 monthly prospects → 15-20% response → 8-12 responses → 40% book → 3-5 meetings.

Valley scaled: 400-500 monthly prospects → 12-18% response → 60-75 responses → 40% book → 24-30 meetings.

Result: 6-8x more meetings booked monthly.
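The funnel is two multiplications. This sketch uses values inside the ranges given: 80 manual prospects at 15%, 450 Valley prospects at 15%, and the 40% booking rate.

```python
def funnel(prospects: int, response_rate: float,
           booking_rate: float = 0.40) -> tuple[float, float]:
    """Expected (responses, meetings) for one month of outreach."""
    responses = prospects * response_rate
    return responses, responses * booking_rate

for label, prospects, rate in [("manual", 80, 0.15), ("valley", 450, 0.15)]:
    responses, meetings = funnel(prospects, rate)
    print(label, round(responses, 1), round(meetings, 1))
```

This yields about 12 responses and 4.8 meetings for the manual case, and 67.5 responses and 27 meetings for the scaled case. Both fall inside the ranges above.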

Time Investment:

Manual approach: 160 hours monthly (full-time resource), 3-5 meetings generated, 32-53 hours per meeting.

Valley approach: 40-60 hours monthly (research eliminated, approval only), 24-30 meetings generated, 1.3-2.5 hours per meeting.

Efficiency improvement: 20-40x better time utilization.
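Hours per meeting is total hours divided by meetings. The best case pairs the fewest hours with the most meetings. Here is a sketch using the figures above.

```python
def hours_per_meeting(hours: tuple[float, float],
                      meetings: tuple[float, float]) -> tuple[float, float]:
    """(best, worst) hours per meeting from (low, high) hours and (low, high) meetings."""
    best = hours[0] / meetings[1]   # fewest hours spread over the most meetings
    worst = hours[1] / meetings[0]  # most hours spread over the fewest meetings
    return best, worst

manual = hours_per_meeting((160, 160), (3, 5))
valley = hours_per_meeting((40, 60), (24, 30))
print([round(x, 1) for x in manual], [round(x, 1) for x in valley])
```

These reproduce the 32-53 and 1.3-2.5 hours-per-meeting figures.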

Pipeline Quality:

Despite volume increase: similar qualification rates (60-70% of meetings qualify), similar close rates (25-35%), and similar average deal sizes.

Pipeline quality holds because personalization quality holds.

Best Practices for Scaling Without Sacrificing Quality:

Successful scaling requires discipline:

Start with Quality, Then Scale:

Don't sacrifice quality for volume prematurely: establish personalization baseline first, train AI thoroughly on your voice, prove response rates with deep personalization, and then gradually increase volume while monitoring quality.

Monitor Leading Indicators:

Track quality metrics continuously: weekly response rate tracking (early warning system), monthly quality audits (comprehensive check), prospect feedback (what they say about messages), and team observations (reps' quality assessment).

Maintain Tier Discipline:

Don't let volume pressure corrupt tiering: best-fit prospects always get best personalization, resist temptation to auto-approve everything, and preserve human review for strategic accounts.

Continuous Improvement Focus:

Even at scale, keep improving: monthly AI training updates (incorporate learnings), message testing (A/B test variations), ICP refinement (focus on responders), and process optimization (eliminate waste).

Accept Volume Limits:

There IS a maximum quality-preserving volume: typically 400-600 prospects monthly per seat while maintaining 12-18% response rates. Beyond that, quality degrades meaningfully and additional volume generates diminishing returns.

It's better to hit the limit and add seats than to exceed it and damage quality.

Valley's personalization architecture shows that scale and quality aren't mutually exclusive. Through comprehensive automated research, sophisticated AI message generation, tiered personalization strategies, and continuous quality monitoring, teams achieve 10-15x volume increases while keeping response rates within 15-20% of pure manual personalization, transforming LinkedIn outreach economics from a labor-intensive craft into scalable, systematic pipeline generation.

Which channels does Valley support?

Valley supports LinkedIn outreach, including connection requests and InMails. Valley users safely send 1000-1200 messages per seat every month.
